What Do You Mean I Can't Run a Script?

The laptop is the most powerful work tool ever created, and we've deliberately disabled its most powerful building block. A look at why blanket script execution bans are the new 'no formulas in Excel' — and what needs to change.

I tried to automate a Word document last month. Not hack a database. Not exfiltrate company secrets. Just auto-generate a weekly status report from data the team already had access to. Vanilla knowledge work. My AI agent wrote the script, showed me the script, explained it line by line.

Then it hit a wall:

running scripts is disabled on this system.

I stared at the screen for a while. This is a machine whose entire purpose is to execute instructions, and we have deliberately told it not to execute instructions.

Imagine buying a car and having the dealership weld the hood shut. "For your safety, you can't access the engine." You'd never accept that from a car dealer. But we accept it from corporate IT every single day.

And I'm not alone in this frustration.

Gill (@gurtej__gill_) put it perfectly:

Permission is the new bottleneck.

— Gill (@gurtej__gill_) January 6, 2026

By the time access clears, the advantage is gone.

Startups ship.

Big tech waits.

Joel Grus (@joelgrus) described a justification loop that will sound painfully familiar: you request an AI coding tool and IT responds, "you already have GitHub Copilot" -- as if asking for a hammer when you already have a screwdriver is unreasonable.

at my previous company it would have been impossible to get permission, because (1) "you already have github copilot", and also (2) getting claude code through infosec / legal / procurement would have been like climbing everest

— Joel Grus 🤠 (@joelgrus) January 5, 2026

And then there's the one that made me laugh out loud. Doug Flips (@DougFlips) shared that his large company blocked all .AI domains. Every one. The team couldn't even read the OpenAI blog -- not use the tool, not run an agent, just read a blog post about what the technology does.

My large company blocked all .AI domains. Couldn’t even read the OpenAI blog.

— Doug Flips (@DougFlips) January 6, 2026

In 2025, they finally started rolling out a Microsoft Copilot trial, then switched to Amazon Q with older Claude models.

You cannot make this up.

Kirk Graham, on the Python.org forums, captured the absurdity in one devastating sentence: "Basically Python is dead at our company. APT Hackers just attacked Excel, but we can still use Excel."

Excel -- the application actively exploited by nation-state hackers -- gets a pass. Python -- the scripting language that powers nearly every AI workflow -- is banned. And 72 comments over five years on a single VS Code GitHub issue tell you this isn't an edge case. It's an industry-wide condition.

The Uncomfortable Truth About How AI Agents Actually Work

Here's what I've found after months of working with AI agents daily: all those pretty UIs everyone is building? They're solving the wrong problem.

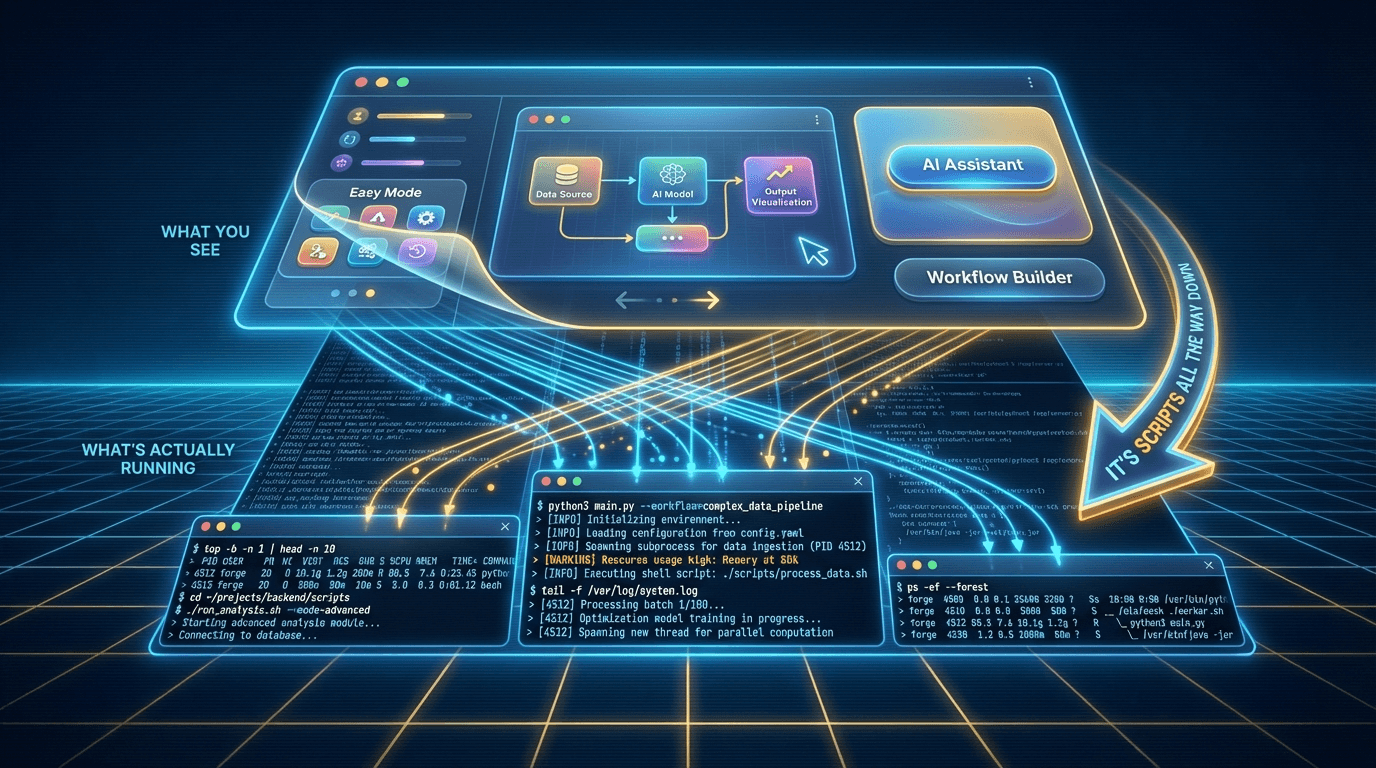

Every week I see a new "AI assistant" with a beautiful dashboard, drag-and-drop workflows, colorful buttons for pre-built prompts. They look great in demos. But they constrain what agents can do to whatever the UI designer anticipated you'd want.

At the most fundamental level, AI agents run scripts on the command line. That's the atomic unit of agent work. An agent reasons, writes a script, executes it, reads the output, decides what to do next. The chat windows and workflow builders are layers on top of that reality. And every layer removes options.
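To make that loop concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular agent product is built: `propose_script` is a hypothetical stand-in for the model call, and `run_script` and `agent_loop` are names I made up for this example. The only real machinery is the standard library's `subprocess` module, which is exactly the part a script execution ban switches off.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import List, Optional, Tuple


def run_script(source: str, timeout_s: float = 10.0) -> Tuple[int, str]:
    """Write `source` to a temp file, execute it, return (exit code, output).

    Raises subprocess.TimeoutExpired if the script runs longer than timeout_s.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "step.py"
        script.write_text(source, encoding="utf-8")
        result = subprocess.run(
            [sys.executable, str(script)],
            capture_output=True,
            text=True,
            timeout=timeout_s,
            cwd=workdir,
        )
    return result.returncode, result.stdout + result.stderr


def propose_script(goal: str, last_output: Optional[str]) -> Optional[str]:
    """Hypothetical stand-in for the model: next script, or None when done."""
    if last_output is None:
        return f"print('status report for: ' + {goal!r})"
    return None  # a real agent would read last_output and decide what is next


def agent_loop(goal: str, max_steps: int = 5) -> List[str]:
    outputs: List[str] = []
    last_output: Optional[str] = None
    for _ in range(max_steps):
        script = propose_script(goal, last_output)  # reason, then write
        if script is None:
            break
        exit_code, last_output = run_script(script)  # execute
        outputs.append(last_output)                  # read the output
        if exit_code != 0:
            break  # stop on failure so a human can look at it
    return outputs


if __name__ == "__main__":
    print(agent_loop("week 12"))
```

Everything interesting lives in the `subprocess.run` call. Take that away and the loop can still write scripts, but it can never learn whether they worked.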

I wrote about this in my first article: CLI is all you need. The command line is the factory floor where the real work gets done. Web UIs are the observation deck. Boris Cherny, who created Claude Code at Anthropic, said it directly: "The terminal is almost like the most universal of all the interfaces in that it's flexible, and it's incorporated into everybody's workflow already."

When IT policy blocks script execution, it doesn't just inconvenience developers -- it architecturally prevents AI agents from doing their job. Multi-agent coordination, autonomous testing, headless execution -- these aren't degraded without script execution. They're impossible.

"But GUIs are more accessible," I hear you saying. "Not everyone can use a command line."

Fair -- I was terrified of the terminal two years ago. But here's what surprised me: even GUI-based AI tools -- Replit Agent, Copilot, Cursor -- need script execution running under the hood. The pretty interface is a wrapper. Underneath, scripts are executing. When IT blocks script execution, they don't just block the CLI crowd. They break the GUI tools too.

The question isn't CLI versus GUI. It's whether we let AI agents do the thing that makes them agents: execute code. 85% of developers regularly use AI coding tools (Faros AI, end of 2025). OpenAI Codex usage has grown 20x since August 2025, with over 1 million developers using it in the past month alone. This is not a fringe movement. This is the mainstream crashing into a policy wall.

Which brings us to the question nobody wants to ask.

The Human Accountability Problem

Here's where this gets uncomfortable -- and I'm including myself in this.

The real reason script execution stays blocked isn't purely technical. Scott Sutherland at NetSPI demonstrated that PowerShell execution policy has 15 known bypasses. As he wrote: "The execution policy was never meant to be a security control." If your security boundary has 15 holes in it, it was never a boundary -- it was a suggestion.

The deeper issue is human. We haven't built the muscle for accountability in an agent-driven world. It's easier to block everything than to learn how to monitor, evaluate, and take responsibility for what our agents produce.

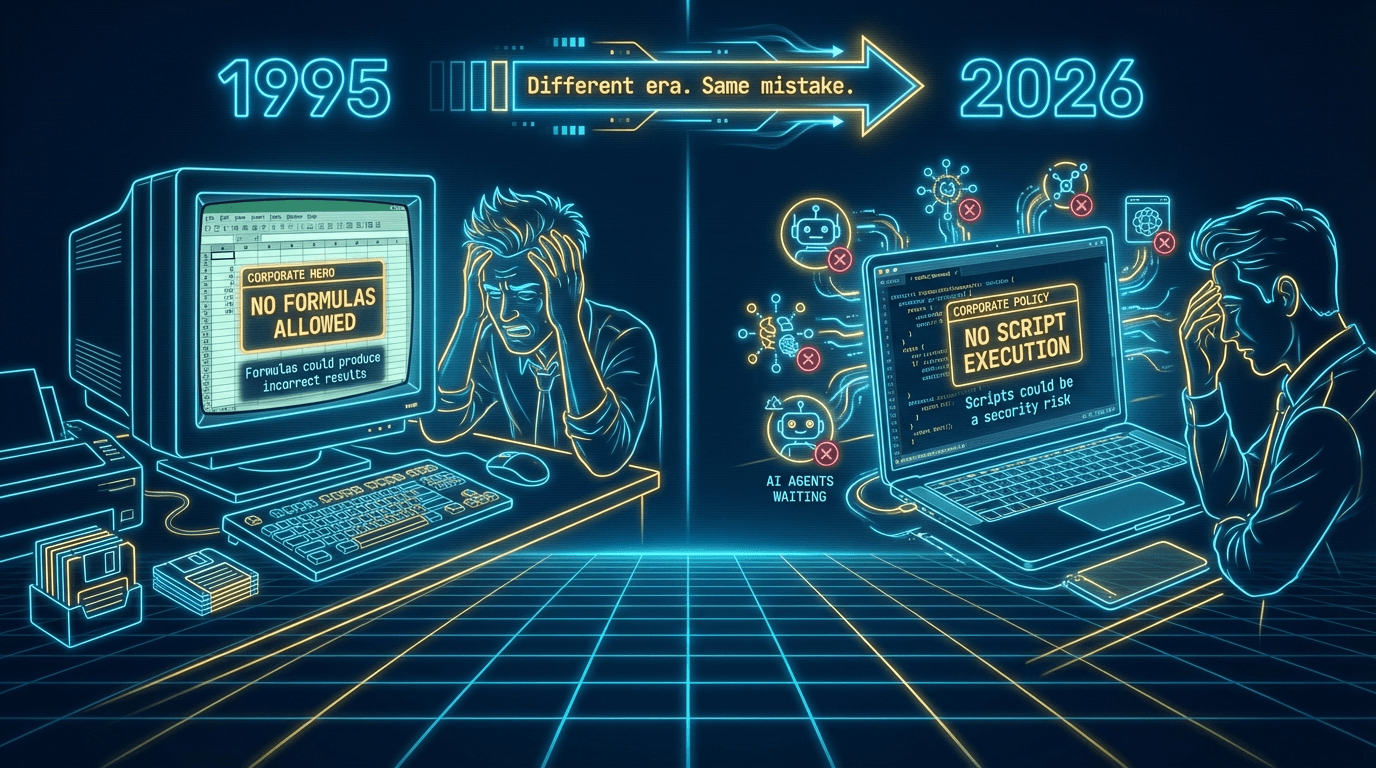

Imagine it's 1995, and IT announces: "You can use Excel, but no formulas. Formulas could produce incorrect results and we can't audit them. Data entry only." Every financial model, every budget forecast, every revenue projection -- gone. That's obviously absurd. Formulas are the entire point of a spreadsheet. But that is exactly what we're doing when we block script execution in the age of AI agents. Scripts are the formulas of this era.

Here's what I've learned the hard way: the UI crutch keeps you passive. You click "Run" and hope for the best. The CLI forces you to watch, to read the output, to notice when something looks wrong. It's uncomfortable at first -- I spent weeks feeling like I was reading a foreign language -- but that discomfort is the learning.

And the alternative? It's already happening. At Amazon, 1,500 engineers endorsed an internal forum thread pushing to adopt Claude Code -- the tool they sell to AWS customers but aren't allowed to use themselves. Anand Iyer (@ai) captured the irony perfectly:

Amazon engineers are frustrated they can't use Claude Code for production work. 1500 employees endorsed adopting it internally on a forum thread. The irony: the engineers who have to sell Claude Code to AWS customers are the ones blocked from using it themselves. "Customers will… https://t.co/yFylRK3TP2

— anand iyer (@ai) February 12, 2026

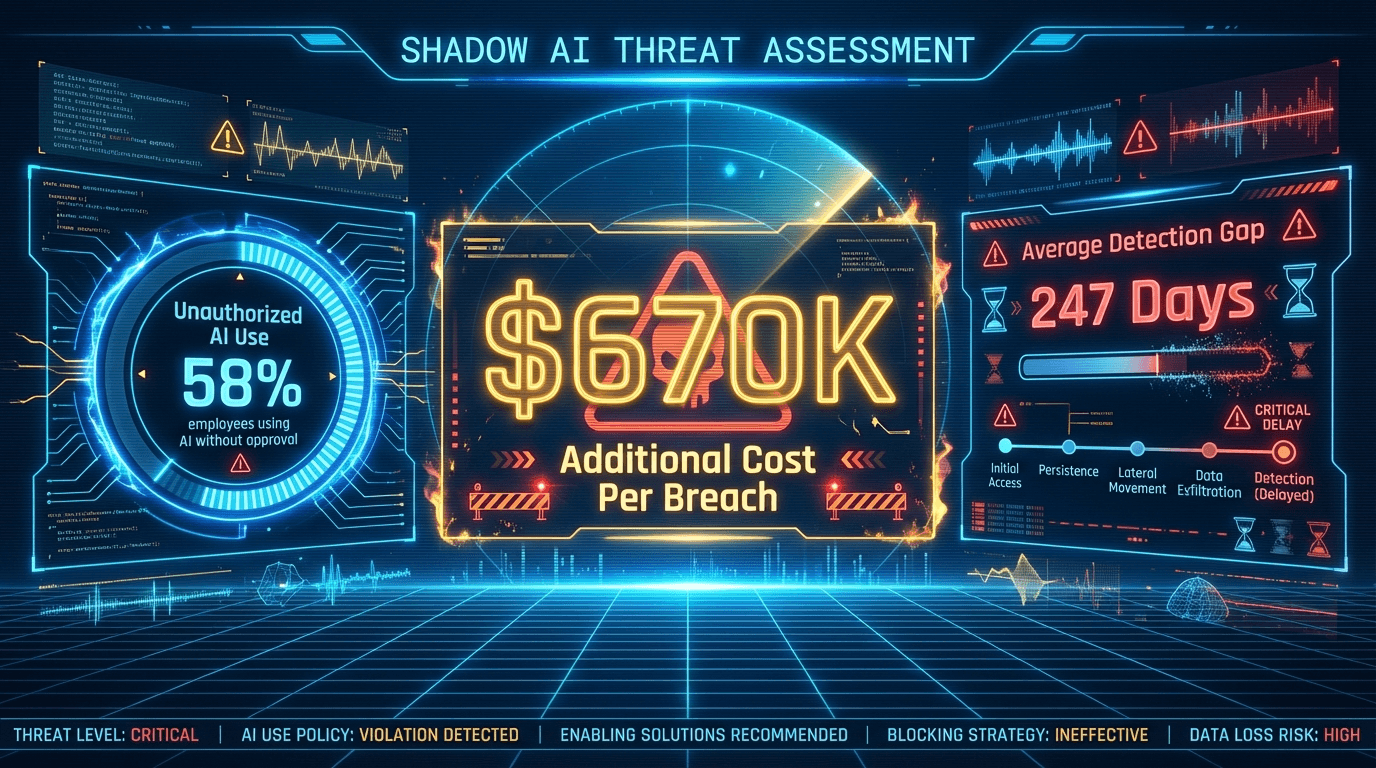

That's not security. That's shadow AI. Microsoft's 2025 Work Trend Index: 58% of employees use AI tools without employer authorization. IBM's 2025 Cost of a Data Breach report: organizations with high shadow AI activity pay $670,000 more per breach, with an average detection time of 247 days. The blocking policy didn't eliminate the risk -- it relocated it.

Shadow AI: One In Four Employees Use Unapproved AI Tools, Research Finds https://t.co/3UIVSbwk3a #cybersecurity #infosec #hacking

— The Cyber Security Hub™ (@TheCyberSecHub) October 30, 2025

As Anton Chuvakin, Security Advisor at Google Cloud, put it: "If you ban AI, you will have more shadow AI and it will be harder to control."

So here's my invitation -- because I went through this same transition myself. Get comfortable watching your agents work. Learn to read the output, even imperfectly. Take ownership of what your AI tools produce, the same way you take ownership of the spreadsheet you send to the board even though Excel did the math.

And here's the thing: a lot of the work agents will eventually handle is the stuff that's already neat and automated inside software applications today -- the data pipelines, the scheduled reports, the structured workflows. That will get fully agentified, and you won't need to watch it happen any more than you watch your email filters run. But there's a whole other category of work -- the messy stuff, the data that's inconsistently formatted, the tasks that span multiple systems, the decisions that require context no single database holds. That work won't be fully automated anytime soon. For those tasks, agents become an incredible pairing partner -- but only if you know how to work with them. The people who build that muscle now will have a compounding advantage.

The organizations racing ahead -- Shopify, where CEO Tobi Lütke (@tobi) declared "reflexive AI usage is now a baseline expectation," or Stripe, where roughly 8,500 of 10,000 employees use LLM tools daily -- aren't building cultures of agent avoidance. They're building cultures of agent accountability.

— tobi lutke (@tobi) April 7, 2025

The only question is whether you'll take the wheel -- or keep accepting that the hood stays welded shut. And if we're going to take the wheel, we need to talk about who should be building the road.

IT as Partner, Not Blocker

Can I say something without everyone getting mad? I want to be careful here, because the easiest version of this article is "IT is the enemy." That's not what I've found. The IT professionals I've talked to aren't trying to make your life harder. They're working from a playbook that made sense five years ago.

Here's the reframe that clicked for me: IT is actually in the best position to solve this problem. They understand identity management, network segmentation, audit logging -- exactly the skills you need to make AI agents run safely. The issue isn't capability -- it's posture. The default is "block." It needs to become "sandbox and audit."

A city doesn't prevent construction by banning hammers. It creates building codes. IT should be the building inspector, not the padlock.

The Cloud Security Alliance published their Agentic Trust Framework in early 2026: "No AI agent should be trusted by default. Trust must be earned through demonstrated behavior." That's not reckless permissiveness. That's zero-trust applied to agents -- the same philosophy IT already uses for network access.

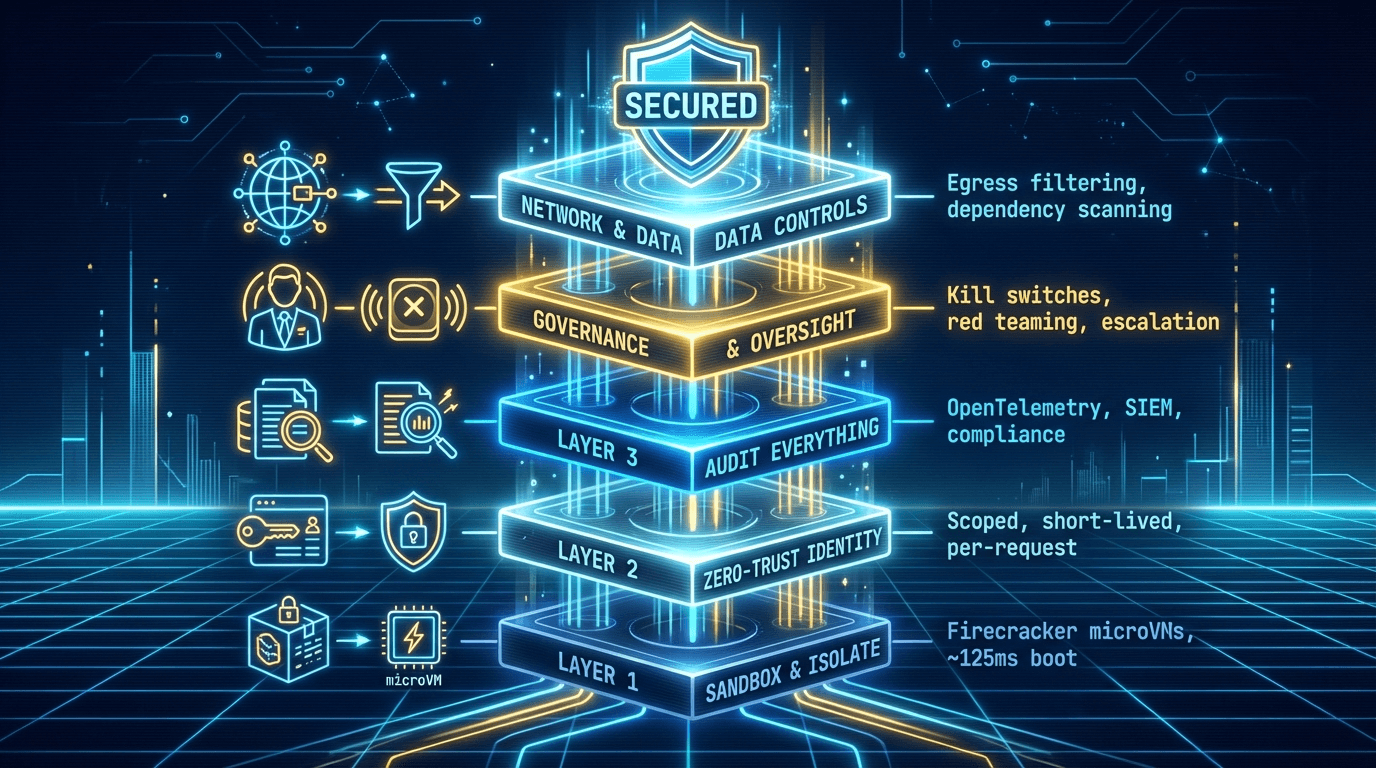

The security stack already exists:

- Sandbox and isolate. Firecracker microVMs boot in ~125 milliseconds. AWS has run every Lambda workload on Firecracker since 2018. Planetary-scale, not experimental.

- Zero-trust identity. Agents get scoped, short-lived, per-request credentials. Treat them like contractors: verified, limited, monitored.

- Audit everything. OpenTelemetry tracing, SIEM integration, compliance APIs. Every command logged and queryable.

- Governance and human oversight. Kill switches, red teaming, escalation policies. Humans set the boundaries; agents operate within them.

- Network and data controls. Egress filtering, dependency scanning, output review. Sandboxing alone doesn't prevent data exfiltration -- network controls are an essential layer.
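Two of those layers, scoped short-lived credentials and "every command logged," are easy to sketch. What follows is a toy illustration in Python, not a security product: `AgentGrant` and `run_audited` are hypothetical names of my own, the audit log is an in-memory list where a real deployment would ship records to a SIEM, and nothing here sandboxes the process. It only shows the shape of "check the grant, run, write the record."

```python
import json
import subprocess
import sys
import time
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical scoped, short-lived credential for one agent."""
    agent_id: str
    allowed_programs: FrozenSet[str]
    expires_at: float  # Unix timestamp

    def permits(self, program: str, now: float) -> bool:
        return now < self.expires_at and program in self.allowed_programs


def run_audited(grant: AgentGrant, argv: List[str], audit_log: List[str],
                timeout_s: float = 30.0) -> Dict:
    """Run argv only if the grant allows it; always append an audit record."""
    now = time.time()
    record: Dict = {"ts": now, "agent": grant.agent_id, "argv": argv}
    if not grant.permits(argv[0], now):
        record["decision"] = "denied"
    else:
        try:
            result = subprocess.run(argv, capture_output=True, text=True,
                                    timeout=timeout_s)
            record.update(decision="allowed", exit_code=result.returncode)
        except subprocess.TimeoutExpired:
            record.update(decision="allowed", exit_code=None, timed_out=True)
    audit_log.append(json.dumps(record))  # one JSON line per attempt
    return record


if __name__ == "__main__":
    log: List[str] = []
    grant = AgentGrant("report-bot", frozenset({sys.executable}),
                       expires_at=time.time() + 300)  # valid for 5 minutes
    run_audited(grant, [sys.executable, "-c", "print('ok')"], log)
    run_audited(grant, ["curl", "https://example.com"], log)  # not in scope
    print("\n".join(log))
```

The design choice worth noticing is that denied attempts get logged too. An audit trail that only records what succeeded tells you nothing about what your agents tried to do.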

The progressive IT leaders getting this right aren't saying "yes to everything." They're saying what Farhan Thawar, VP of Engineering at Shopify, said: "Hey, we're likely going to do this. How can we do it safely?" From gatekeeper to architect of digital trust.

Still, even the best policy change only gets you halfway there. Because once you unlock script execution, you run into a second wall I wasn't expecting.

The Second Unlock: Virtual Compute Per Employee

Solving the script execution policy is necessary but not sufficient. Even if IT flips the switch tomorrow, you hit a second wall: the laptop itself can't keep up.

The legacy mental model treats virtual compute as something reserved for production systems -- servers, databases, automated build pipelines. Everyone else gets a laptop and Outlook. But here's what surprised me: every company I found that had truly unlocked AI agent productivity had arrived at the same conclusion -- every knowledge worker benefits from a dedicated compute environment.

The examples were more widespread than I expected. Shopify built Spin, their own cloud dev environment on Kubernetes and GCP. Stripe gives every engineer a dedicated EC2 "devbox." GitLab -- 2,375 employees across 65+ countries, zero offices -- built HackyStack for self-service provisioning. Uber has DevPods (48-core containers, 2.5x faster builds). Slack hit 90% developer adoption of remote dev environments within three months.

"But those are all developer-focused engineering companies." Fair counterargument. But General Motors' Director of Global IT, Michael Anderson, said of their Microsoft Dev Box deployment: "It takes weeks for a developer to get a laptop. Utilizing Dev Box, I can spin up a dev environment in minutes." DZ BANK -- regulated German banking -- cut onboarding from one week to one day. SOM, an architecture firm, replaced $20,000 workstations with cloud compute. This isn't just a Silicon Valley pattern.

Then there's the grassroots version. Karl Hughes documented running a full dev environment on a $200 Chromebook plus a $40/month DigitalOcean droplet. People are solving this themselves because their organizations won't.

"Hasn't VDI failed before?" Yes, honestly. But cloud dev environments -- Codespaces, Dev Box, Spin -- are ephemeral, project-specific, and purpose-built. Not generic remote desktops. Sandboxes designed for work.

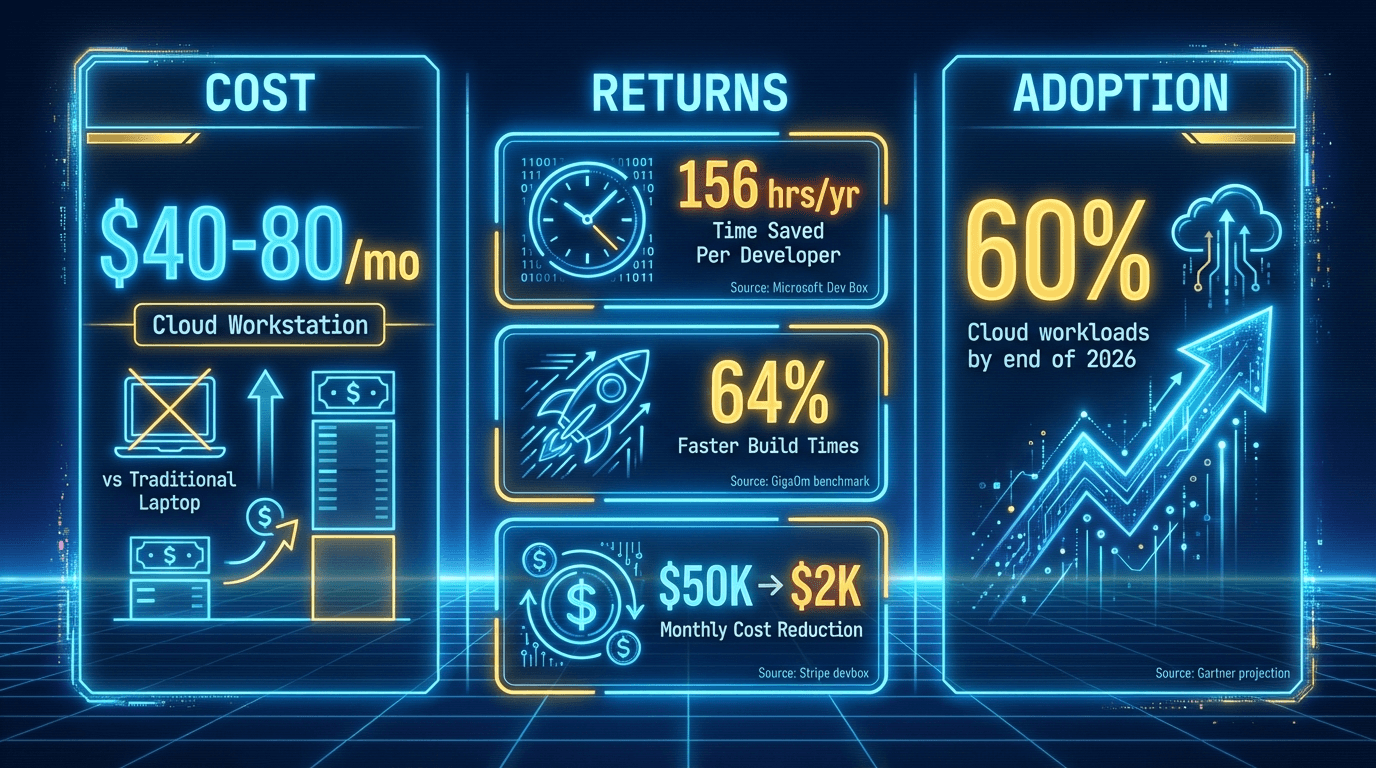

The economics are hard to argue with. A self-managed VPS starts as low as $20/month; managed environments like Codespaces run $40-80/month; enterprise solutions like Dev Box or WorkSpaces run $100-150/month. Microsoft found Dev Box saves 156 hours per developer per year. GigaOm benchmarked 64% faster build times versus laptops. Stripe estimates their infrastructure replaces roughly $50,000/month in engineering labor for $2,000/month in compute.
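A quick back-of-the-envelope check on those numbers, in Python. One loud assumption: the 156-hour figure was reported for Dev Box, and I am applying it to the cheaper tiers purely for illustration. The function name `break_even_hourly_rate` is mine.

```python
def break_even_hourly_rate(monthly_cost: float, hours_saved_per_year: float) -> float:
    """Hourly value of time at which the environment pays for itself."""
    return (monthly_cost * 12) / hours_saved_per_year


HOURS_SAVED = 156  # Microsoft's Dev Box figure; assumed for the other tiers

tiers = [
    ("Self-managed VPS", 20),
    ("Codespaces (high end)", 80),
    ("Dev Box / WorkSpaces (high end)", 150),
]

for label, monthly in tiers:
    rate = break_even_hourly_rate(monthly, HOURS_SAVED)
    print(f"{label}: ${monthly * 12}/year, breaks even at ${rate:.2f}/hour")
# Self-managed VPS: $240/year, breaks even at $1.54/hour
# Codespaces (high end): $960/year, breaks even at $6.15/hour
# Dev Box / WorkSpaces (high end): $1800/year, breaks even at $11.54/hour

stripe_ratio = 50_000 / 2_000  # labor replaced per dollar of compute
print(f"Stripe estimate: {stripe_ratio:.0f}x return on compute spend")
# Stripe estimate: 25x return on compute spend
```

Even at the most expensive tier, the environment pays for itself if an hour of the employee's time is worth more than about $11.54.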

Forget “works on my machine.” The future is cloud-native dev environments.

— Microsoft Azure Developers (@MSAzureDev) October 31, 2025

Microsoft Dev Box + GitHub Codespaces = faster onboarding, fewer headaches, and scalable workflows.

Learn more 👉 https://t.co/IJHCdYunO8 #CloudDevelopment #DevOps

Here's why this matters beyond developers: agents that schedule tasks, monitor systems, and execute overnight can't do that on a machine that sleeps when you close the lid. When I run agent swarms, my laptop fans sound like a runway. Knowledge workers need a workshop they can leave running, not a clean room they have to be physically present in.

Gartner projects 60% of cloud workloads will be built using cloud development environments by end of 2026. These aren't developer environments anymore. They're agent environments waiting to happen.

And once you make that shift -- once the compute lives somewhere persistent and the agents run there instead of on your local machine -- something unexpected happens.

The Part Where Your Device Stops Mattering

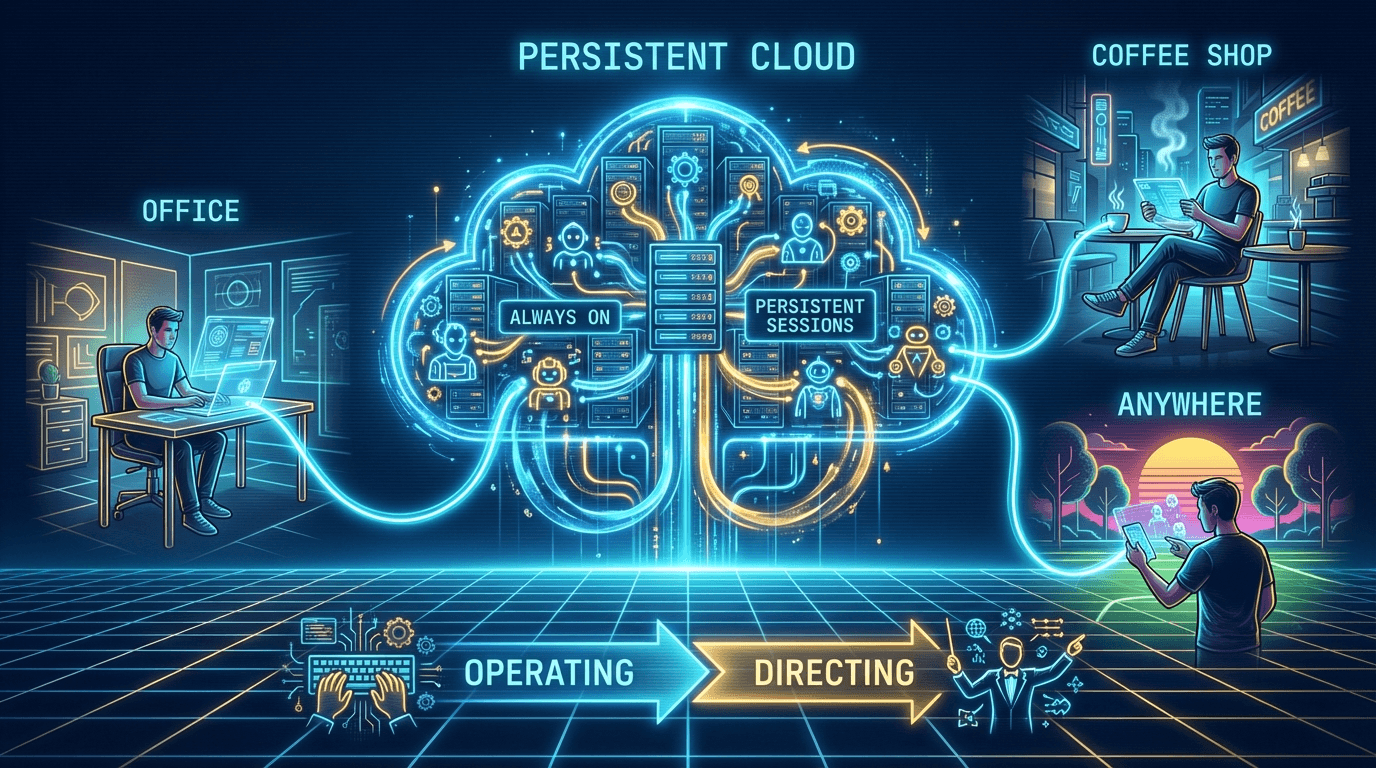

Once I moved my agent infrastructure off my laptop and into a virtual environment, something shifted that I wasn't planning for. The device in front of me stopped being the bottleneck.

I'm at my kid's soccer game on a Saturday. Phone in hand. I can kick off a research task, check the output of something I started that morning. Not because my phone became a development workstation -- but because I'm not the one executing anymore. The agents are. They're running in a persistent environment that doesn't care whether I'm directing from a laptop, an iPad at a coffee shop, or a phone in the bleachers.

The shift is profound: you go from operating your tools to directing your agents. You're describing outcomes and reviewing results, not clicking through menus or waiting for local builds.

I've started calling this "agent whispering," which sounds ridiculous, and I'm aware it sounds ridiculous. But when execution lives in the cloud, your device, your location, your screen real estate -- all of it matters dramatically less.

The most capable version of you isn't the one sitting at a desk with three monitors. It's the one who has set up infrastructure that works whether you're at that desk or not.

I should show my receipts. I'm running a VPS with an agent infrastructure layer on top. Multiple agents, persistent sessions, scheduling. It's not pretty -- the monitoring is held together with the digital equivalent of duct tape and optimism. But the productivity unlock is real. I regularly kick off multi-agent workflows that need to run for hours and keep going after I close the lid and walk away.

A business person with no computer science background can set this up. It's not free, and it's not trivial, but the barrier is measured in days, not semesters. If I can do it, the argument that "this is only for engineers" needs updating.

What Needs to Happen

Here's what I think needs to happen. Not as a mandate -- I'm a power user with strong opinions, not an authority -- but as an invitation.

To Leadership: Rethink Both Policy and Infrastructure

First: policy. Revisit the blanket bans. Ask your IT teams when those policies were last evaluated against the current landscape. If the answer is "before AI agents existed," that's your signal.

Second: infrastructure. Give knowledge workers sandboxed compute. Not full server access. Not root on production. A safe space where agents can run scripts and execute workflows without touching anything they shouldn't. The cost starts as low as $20/month per employee -- less than most software license bundles you're already paying for.

Your people are already doing this on personal devices and shadow accounts. The question is whether you'd rather it happen inside your security perimeter or outside it.

To IT: Be the Architect, Not the Gatekeeper

The organizations that get this right will have IT teams who design the sandbox environments, configure the guardrails, and build the audit trails. That's architecture work, not gatekeeping. It's harder, more valuable, and honestly more interesting than maintaining block lists.

Start small. A sandboxed container for a willing team. Docker-based isolation. Audit logging from day one. Expand what works.
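For a sense of how small "start small" can be, here is a sketch of a locked-down `docker run` invocation, built in Python so the exact command can be written to an audit log before it runs. The flags are standard Docker CLI options. The image tag, the host path, and the `sandbox_command` helper are assumptions for illustration, and the resource limits are placeholders you would tune.

```python
import shlex
from typing import List


def sandbox_command(image: str, host_dir: str, script: str,
                    memory: str = "512m", cpus: str = "1.0") -> List[str]:
    """Build a docker run command that executes `script` with tight limits."""
    return [
        "docker", "run", "--rm",
        "--network", "none",                    # no egress at all by default
        "--read-only",                          # immutable root filesystem
        "--tmpfs", "/tmp",                      # scratch space that vanishes
        "--cap-drop", "ALL",                    # drop Linux capabilities
        "--security-opt", "no-new-privileges",
        "--pids-limit", "128",
        "--memory", memory,
        "--cpus", cpus,
        "--volume", f"{host_dir}:/work:ro",     # job files mounted read-only
        "--workdir", "/work",
        image,
        "python", script,
    ]


if __name__ == "__main__":
    cmd = sandbox_command("python:3.12-slim", "/srv/agent-jobs/report", "report.py")
    # Log the exact command first; hand it to subprocess.run(cmd) to execute.
    print(shlex.join(cmd))
```

Starting from `--network none` and opening specific egress later matches the "sandbox and audit" posture: the default is closed, and every exception is a decision someone made on purpose.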

To Employees: Get Uncomfortable

This one's for the person reading this who thinks, "My company will never allow this." Maybe. But maybe it's worth raising the question.

The Shopify framing is the one I keep coming back to: "Hey, we're likely going to do this. How can we do it safely?" That's not confrontational. That's collaborative. It positions you as someone who wants to move forward with IT, not around them.

In the meantime, get familiar with what's possible. Try a CLI tool on your personal machine. Spin up a free-tier cloud environment on a weekend. The gap between "I've heard about this" and "I've tried this" is where conviction lives. Nobody is going to hand you this capability. You'll need to advocate for it.

The Other Half of the Equation

In The Missing Protocols, I made the case for giving agents standardized APIs and protocols -- the missing primitives that let agent tools talk to each other and to your systems. This article is the other half of that argument.

APIs without script execution are suggestions -- an agent looks up information, formats a response, and hands it back to you for manual action. APIs with a script execution environment are results -- the agent processes, transforms, feeds into the next step, and delivers an outcome. The difference between "here's what you could do" and "here's what I did" is an execution environment.

If that article was about giving agents a voice, this one is about giving them hands.

I'm still working through all of this. The infrastructure is evolving, the security frameworks are maturing, and I'm learning something new roughly every week. If your organization is navigating this transition -- or if you think I've got something wrong -- I'd genuinely like to hear about it.

Find me on X at @SelectStarKyle or check out ForgeVista.
